Agile Management - Complexity Thinking, a presentation by Jurgen Appelo.

How to Deal with Unknown Unknowns

Getting people together to share information and make joint decisions is the best way to deal with the unknown.

Software projects always suffer from limited predictability. That is not only because of the many feedback loops inside the system, but also because every team has to face the unknown.

The Incompressibility Principle

“There is no accurate (or rather, perfect) representation of the system which is simpler than the system itself. In building representations of open systems, we are forced to leave things out, and since the effects of these omissions are nonlinear, we cannot predict their magnitude.” (Cilliers, Paul. “Knowing Complex Systems.” In Richardson, K.A. (ed.), Managing Organizational Complexity: Philosophy, Theory and Application.)

From the Incompressibility Principle, we can infer that there will always be unknowns: things unknown about the system itself, and things unknown about its environment.

Known Unknowns

We can distinguish two kinds of unknowns. The known unknowns are the things we know that we don’t know. For example, we know that the customer will sometimes change her mind, but we don’t know when or in which cases. And we know there’s a chance that team members will get sick. We just don’t know who or when. I sometimes refer to these kinds of risks as jokers. We know the jokers are in the deck of cards. We just don’t know which hands will get them.

Unknown Unknowns

The unknown unknowns are the things we don’t know that we don’t know. For example, I was once confronted with a team member who had, quite unexpectedly, developed a psychological disorder. And I once unexpectedly became chairman of an institution, when the other two board members quit one month after I joined the board. I had never dealt with such situations before, and I had not taken these eventualities into account in my decisions. Such events are sometimes called black swans.

Until black swans were discovered in Australia, people in the Old World could imagine only white swans, because white swans were all they had ever seen. And so they predicted that the next swan they saw would be white. The discovery of black swans shattered this prediction. The black swan is a metaphor for the uselessness of predictions based on earlier experience in the presence of unknown unknowns. (See also: Taleb, Nassim Nicholas. The Black Swan.)

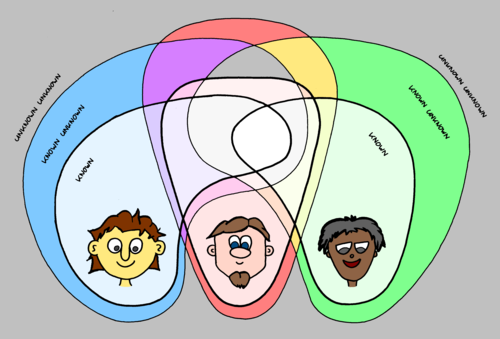

Limited Knowledge

It is important to understand that the unknown always depends on the observer. Some people already knew about black swans, but that didn’t make them any less surprising to those who had never seen them. My fellow board members knew they wanted to leave their positions well before they shocked me with their announcements. And if one of your team members turns out to be a criminal on the FBI’s Ten Most Wanted list, he probably knew that long before you found out.

The known unknowns and unknown unknowns in your organization differ from person to person. Getting people together to share information and make joint decisions is the best way to deal with the unknown.

This text is an excerpt from the Agile Management course, available from March 2011 in various countries.